A Radically Modern Approach to Introductory Physics

Second Edition

Volume 2: Four Forces

David J. Raymond

The New Mexico Tech Press

Socorro, New Mexico, USA

A Radically Modern Approach to Introductory Physics Second Edition

Volume 2: Four Forces

David J. Raymond

Copyright © 2012, 2015 David J. Raymond

Second Edition

Original state, 14 December 2015

Content of this book available under the Creative Commons Attribution-Noncommercial-Share-Alike License. See http://creativecommons.org/licenses/by-nc-sa/3.0/ for details.

Publisher’s Cataloging-in-Publication Data

OCLC Number: 932065971 (print) – 932066146 (ebook)

Published by The New Mexico Tech Press, a New Mexico nonprofit corporation

This copy printed by CreateSpace, Charleston, SC

Printed in the United States of America

The New Mexico Tech Press

Socorro, New Mexico, USA

http://press.nmt.edu

To my wife Georgia and my daughters Maria and Elizabeth.

The idea for a “radically modern” introductory physics course arose out of frustration in the physics department at New Mexico Tech with the standard two-semester treatment of the subject. It is basically impossible to incorporate a significant amount of “modern physics” (meaning post-19th century!) in that format. It seemed to us that largely skipping the “interesting stuff” that has transpired since the days of Einstein and Bohr was like teaching biology without any reference to DNA. We felt at the time (and still feel) that an introductory physics course for non-majors should make an attempt to cover the great accomplishments of physics in the 20th century, since they form such an important part of our scientific culture.

It would, of course, be easy to pander to students – teach them superficially about the things they find interesting, while skipping the “hard stuff”. However, I am convinced that they would ultimately find such an approach as unsatisfying as would the educated physicist. What was needed was a unifying vision which allowed the presentation of all of physics from a modern point of view.

The idea for this course came from reading Louis de Broglie’s Nobel Prize address.1 De Broglie’s work is a masterpiece based on the principles of optics and special relativity, which qualitatively foresees the path taken by Schrödinger and others in the development of quantum mechanics. It thus dawned on me that perhaps optics and waves together with relativity could form a better foundation for all of physics, providing a more interesting way into the subject than classical mechanics.

Whether this is so or not is still a matter of debate, but it is indisputable that such a path is much more fascinating to most college students interested in pursuing studies in physics — especially those who have been through the usual high school treatment of classical mechanics. I am also convinced that the development of physics in these terms, though not historical, is at least as rigorous and coherent as the classical approach.

After 15 years of gradual development, it is clear that the course failed in its original purpose, as a replacement for the standard, one-year introductory physics course with calculus. The material is way too challenging, given the level of interest of the typical non-physics student. However, the course has found a niche at the sophomore level for physics majors (and occasional non-majors with a special interest in physics) to explore some of the ideas that drew them to physics in the first place. It was placed at the sophomore level because we found that having some background in both calculus and introductory college-level physics is advantageous for most students. However, we allow incoming freshmen into the course if they have an appropriate high school background in physics and math.

The course is tightly structured, and it contains little or nothing that can be omitted. However, it is designed to fit into two semesters or three quarters. In broad outline form, the structure is as follows:

A few words about how I have taught the course at New Mexico Tech are in order. As with our standard course, each week contains three lecture hours and a two-hour recitation. The recitation is the key to making the course accessible to the students. I generally have small groups of students working on assigned homework problems during recitation while I wander around giving hints. After all groups have completed their work, a representative from each group explains their problem to the class. The students are then required to write up the problems on their own and hand them in at a later date. The problems are the key to student learning, and associating course credit with the successful solution of these problems insures virtually 100% attendance in recitation.

In addition, chapter reading summaries are required, with the students urged to ask questions about material in the text that gave them difficulties. Significant lecture time is taken up answering these questions. Students tend to do the summaries, as they also count for their grade. The summaries and the questions posed by the students have been quite helpful to me in indicating parts of the text which need clarification.

The writing style of the text is quite terse. This partially reflects its origin in a set of lecture notes, but it also focuses the students’ attention on what is really important. Given this structure, a knowledgeable instructor able to offer one-on-one time with students (as in our recitation sections) is essential for student success. The text is most likely to be useful in a sophomore-level course introducing physics majors to the broad world of physics viewed from a modern perspective.

I freely acknowledge stealing ideas from Edwin Taylor, John Archibald Wheeler, Thomas Moore, Robert Mills, Bruce Sherwood, and many other creative physicists, and I owe a great debt to them. The physics department at New Mexico Tech has been quite supportive of my efforts over the years relative to this course, for which I am exceedingly grateful. Finally, my humble thanks go out to the students who have enthusiastically (or on occasion unenthusiastically) responded to this course. It is much, much better as a result of their input.

My colleagues Alan Blyth, David Westpfahl, Ken Eack, and Sharon Sessions were brave enough to teach this course at various stages of its development, and I welcome the feedback I have received from them. Their experience shows that even seasoned physics teachers require time and effort to come to grips with the content of this textbook!

The reviews of Allan Stavely and Paul Arendt in conjunction with the publication of this book by the New Mexico Tech Press have been enormously helpful, and I am very thankful for their support and enthusiasm. Penny Bencomo and Keegan Livoti taught me a lot about written English with their copy editing.

David J. Raymond

Aside from numerous corrections, clarifications, and minor enhancements, the main additions to this edition include the following:

As in the first edition, I am greatful for the reviews of Paul Arendt and Allan Stavely, who always manage to catch things that I have overlooked.

David J. Raymond

Physics Department

New Mexico Tech

Socorro, NM, USA

djraymondnm@gmail.com

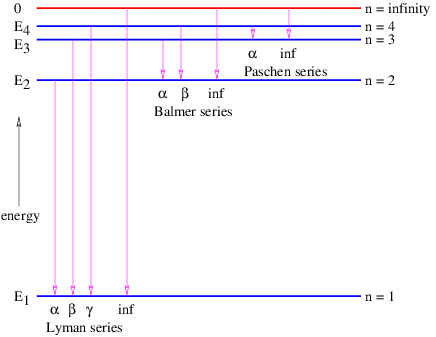

In this chapter we study the law that governs gravitational forces between massive bodies. We first introduce the law and then explore its consequences. The notion of a test mass and the gravitational field is developed, followed by the idea of gravitational flux. We then learn how to compute the gravitational field from more than one mass, and in particular from extended bodies with spherical symmetry. We finally examine Kepler’s laws and learn how these laws and the conservation laws for energy and angular momentum may be used to solve problems in orbital dynamics.

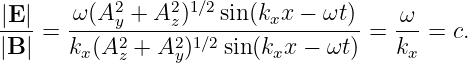

Of Newton’s accomplishments, the discovery of the universal law of gravitation ranks as one of the greatest. Imagine two masses, M1 and M2, separated by a distance r. The force has the magnitude

| (13.1) |

where G = 6.67 × 10-11 m3 kg-1 s-2 is the universal gravitational constant. The gravitational force is always attractive and it acts along the line of centers between the two masses.

The gravitational field at any point is equal to the gravitational force on some test mass placed at that point divided by the mass of the test mass. The dimensions of the gravitational field are length over time squared, which is the same as acceleration. For a single point mass M (other than the test mass), Newton’s law of gravitation tells us that

| (13.2) |

where r is the position of the test point relative to the mass M. Note that we have written this equation in vector form, reflecting the fact that the gravitational field is a vector. Thus, r = xtest - xmass, where xtest and xmass are the position vectors of the test point and the mass M. The vector r points from the mass to the test point. The quantity r = |r| is the distance from the mass to the test point.

____________________________

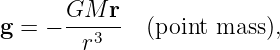

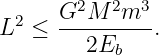

If there is more than one mass, then the total gravitational field at a test point is obtained by computing the individual fields produced by each mass at the test point and vectorially adding these fields. This process is schematically illustrated in figure 13.1.

____________________________

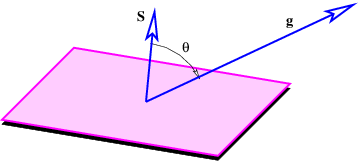

The next concept we need to discuss is the gravitational flux. Figure 13.2 shows a rectangular area S with a vector S perpendicular to the rectangle. The vector S is defined to have length S, so it is a compact way of representing the size and orientation of a rectangle in three dimensional space. The vector S could point either upward or downward, and the choice of directions turns out to be important. This is why we say that S represents a directed area.

Figure 13.2 also shows a vector g, representing the gravitational field on the surface of the rectangle. Its value is assumed here not to vary with position on the rectangle. The angle θ is the angle between the vectors S and g.

____________________________

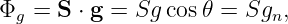

The gravitational flux through the rectangle is defined as

| (13.3) |

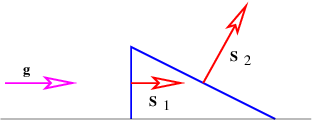

where gn = g cos θ is the component of g normal to the rectangle. The flux is thus larger for larger areas and for larger gravitational fields. However, only the component of the gravitational field normal to the rectangle (i. e., parallel to S) counts in this calculation. A consequence is that the gravitational flux through area 1, S1 ⋅ g, in figure 13.3 is the same as the flux through area 2, S2 ⋅ g.

The significance of the directedness of the area is now clear. If the vector S pointed in the opposite direction, the flux would have the opposite sign. When defining the flux through a rectangle, it is necessary to define which way the flux is going. This is what the direction of S does — a positive flux is defined as going from the side opposite S to the side of S.

An analogy with flowing water may be helpful. Imagine a rectangular channel of cross-sectional area S through which water is flowing at velocity v. The flux of water through the channel, which is defined as the volume of water per unit time passing through the cross-sectional area, is Φw = vS. The water velocity takes the place of the gravitational field in this case, and its direction is here assumed to be normal to the rectangular cross-section of the channel. The field thus expresses an intensity (e. g., the velocity of the water or the strength of the gravitational field), while the flux expresses an amount (the volume of water per unit time in the fluid dynamical case). The gravitational flux is thus the amount of some gravitational influence, while the gravitational field is its strength. We now try to more clearly understand to what this amount really refers.

We need to briefly consider the case in which the gravitational field varies from one point to another on the rectangular surface. In this case a proper calculation of the flux through the surface cannot be made using equation (13.3) directly. Instead, we must break the surface into a grid of sub-surfaces. If the grid is sufficiently fine, the gravitational field will be nearly constant over each sub-surface and equation (13.3) can be applied separately to each of these. The total flux is then the sum of all the individual fluxes.

____________________________

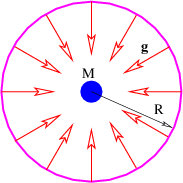

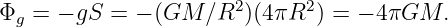

There is actually no need for the area in figure 13.2 to be rectangular. We can calculate the gravitational flux through the surface of a sphere of radius R with a mass M at the center. As illustrated in figure 13.4, the gravitational field points inward toward the mass. It has magnitude g = GM∕R2, so if we desire to calculate the gravitational flux out of the sphere, we must introduce a minus sign. Finally, the area of a sphere of radius R is S = 4πR2, so the flux is

| (13.4) |

Notice that this flux doesn’t depend on how big the sphere is — the factor of R2 in the area cancels with the factor of 1∕R2 in the gravitational field. This is a hint that something profound is going on. The size of the surface enclosing the mass is unimportant, and neither is its shape — the answer is always the same — the gravitational flux outward through any closed surface surrounding a mass M is just Φg = -4πGM! This is an example of Gauss’s law applied to gravity.

____________________________

It is possible to formally prove this result using arguments like those posed in figure 13.3, but perhaps the easiest way to understand this result is via the analogy with the flow of water. If we think of the mass as something which destroys water at a certain rate, then there must be an inward flow of water through the surfaces in the left and center examples in figure 13.5. Furthermore, the volume of water per unit time flowing inward through these surfaces is the same in the two examples, because the rate at which water is being destroyed is the same. In the right case the mass is not contained inside the surface and though water flows into the volume bounded by the surface, it also flows out the other side, resulting in a net outward (or inward) volume flux through the surface of zero.

____________________________

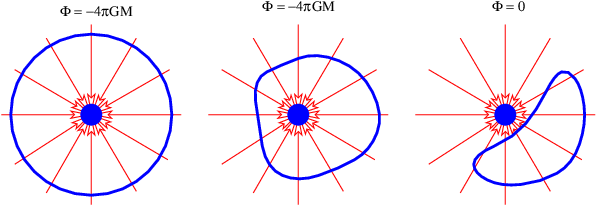

Gauss’s law extends trivially to more than one mass. As figure 13.6 shows, the outward flux through a closed surface is just

| (13.5) |

In other words, all masses inside the closed surface contribute to the flux, while no masses outside the surface contribute. This is the most general statement of Gauss’s law as it applies to gravity.

An important application of Gauss’s law is to show that the gravitational field outside of a spherically symmetric extended mass M is exactly the same as if all the mass were concentrated at a point at the center of the sphere. The proof goes as follows: Imagine a sphere concentric with the center of the extended mass, but with larger radius. The gravitational flux from the mass is just Φg = -4πGM as before. However, because of the assumed spherical symmetry, we know that the gravitational field points normally inward at every point on the spherical surface and is equal in magnitude everywhere on the sphere. Thus we can infer that Φg = -4πR2g, where R is the radius of the sphere and g is the magnitude of the gravitational field at radius R. From these two equations we immediately infer that the field magnitude is

| (13.6) |

Expressing this in vector form for arbitrary radius r, and remembering that the gravitational field points inward, we find that

| (13.7) |

which is precisely the equation for g resulting from a point mass M. Recall that r points from the mass to the test point.

So far our discussion of gravity has been completely non-relativistic. We will not explore in detail how the theory of gravity changes in a completely relativistic treatment. As we noted earlier in the course, Einstein’s general theory of relativity covers this, and the mathematics are formidable. We confine ourselves to two comments:

____________________________

One potentially observable prediction of relativity is the existence of gravitational waves. Imagine two stars revolving around each other. The gravitational field from these stars will change periodically due to this motion. However, this change propagates outward only at the speed of light. As a result, ripples in the field, or gravitational waves, spread outward from the revolving stars. Efforts are currently under way to develop apparatus to detect gravitational waves produced by violent cosmic events such as the explosion of a supernova.

Johannes Kepler, using data compiled by Tycho Brahe, inferred three laws governing the motions of planets in the solar system:

These laws were instrumental in the development of modern mechanics and the universal law of gravitation by Isaac Newton.

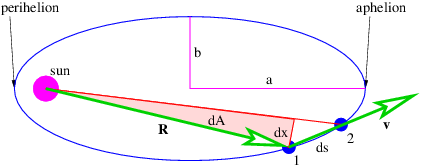

Showing that the first law is consistent with Newtonian mechanics is mathematically more difficult than we can undertake in this course. However, the second law turns out to be a simple consequence of the conservation of angular momentum. Figure 13.7 shows an elliptical orbit with the area swept out as a planet moves from position 1 to position 2. We estimate this area as dA = Rdx∕2, where we have ignored the small unshaded part of the area to the right of the shaded triangle. The distance traveled by the planet in time dt is ds, so the magnitude of the velocity is v = ds∕dt. However, in computing the angular momentum, we need the tangential component of the velocity, i. e., the component normal to the radius vector R. This is simply vt = dx∕dt. The angular momentum is L = mRvt = mRdx∕dt, where m is the mass of the planet. Combining this with the formula for dA results in

| (13.8) |

Since gravitation is a central force, angular momentum is conserved, which means that dA∕dt is constant. Thus, we have shown that conservation of angular momentum is equivalent to Kepler’s second law.

Kepler’s third law turns out to be a consequence of the universal law of gravitation. We can prove this for circular orbits. We know that a planet moving in a circular orbit around the sun is accelerating toward the sun with the centripetal acceleration a = v2∕R, where v is the speed of the planet’s motion in its orbit and R is the orbit’s radius. This acceleration is caused by the gravitational force, so we can equate the force divided by the planetary mass to a, resulting in

| (13.9) |

where M is the mass of the sun. This may be solved for v:

| (13.10) |

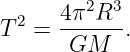

Eliminating v in favor of the period of revolution T = 2πR∕v results in

| (13.11) |

This agrees with Kepler’s third law since the semi-major axis of a circle is simply the radius R.

The gravitational force is conservative, so two point masses M and m separated by a distance r have a potential energy:

| (13.12) |

It is easily verified that differentiation recovers the gravitational force.

____________________________

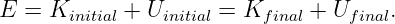

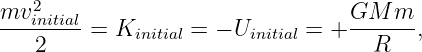

The conservation of energy and angular momentum in planetary motions can be used to solve many practical problems involving motion under the influence of gravity. For instance, suppose a bullet is shot straight upward from the surface of the moon. One might ask what initial velocity is needed to insure that the bullet will escape from the gravity of the moon. Since total energy E is conserved, the sum of the initial kinetic and potential energies must equal the sum of the final kinetic and potential energies:

| (13.13) |

For the bullet to escape the moon, its kinetic energy must remain positive no matter how far it gets from the moon. Since the potential energy is always negative, asymptoting to zero at infinite distance (i. e., Ufinal = 0), the minimum total energy consistent with this condition is zero. For zero total energy we have

| (13.14) |

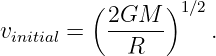

where m is the mass of the bullet, M is the mass of the moon, R is the radius of the moon, and vinitial is the minimum initial velocity required for the bullet to escape. Solving for vinitial yields

| (13.15) |

This is called the escape velocity. Notice that the escape velocity from a given radius is a factor of 21∕2 larger than the velocity needed for a circular orbit at that radius (see equation (13.10)).

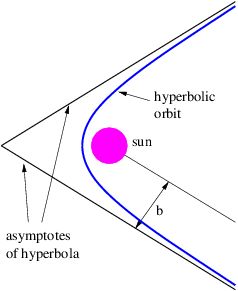

An object is energetically bound to the sun if its kinetic plus potential energy is less than zero. In this case the object follows an elliptical orbit around the sun as shown by Kepler. However, if the kinetic plus potential energy is zero, the object follows a parabolic orbit, and if it is greater than zero, a hyperbolic orbit results. In the latter two cases the sun also resides at a focus of the parabola or hyperbola. Figure 13.8 shows a typical hyperbolic orbit. The impact parameter, defined in this figure, is the closest the object would have come to the center of the sun if it hadn’t been deflected by gravity.

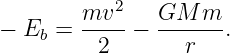

Sometimes energy and angular momentum conservation can be used together to solve problems. For instance, suppose we know the energy and angular momentum of an asteroid of mass m and we wish to infer the maximum and minimum distances of the asteroid from the sun, the so-called aphelion and perihelion distances. Since the asteroid is gravitationally bound to the sun, it is convenient to characterize the total energy by Eb = -E, the so-called binding energy. If v is the orbital speed of the asteroid and r is its distance from the sun, then the binding energy can be written in terms of the kinetic and potential energies:

| (13.16) |

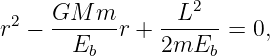

The magnitude of the angular momentum of the asteroid is L = mvtr, where vt is the tangential component of the asteroid’s velocity. At aphelion and perihelion, the radial part of the velocity of the asteroid is zero and the speed equals the tangential component of the velocity, v = vt. Thus, at aphelion and perihelion we can eliminate v in favor of the angular momentum:

| (13.17) |

This can be rearranged into a quadratic equation

| (13.18) |

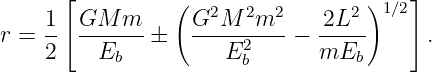

which can be solved to yield

| (13.19) |

The larger of the two solutions yields the aphelion value of the radius while the smaller yields the perihelion.

Equation (13.19) tells us something else interesting. The quantity inside the square root cannot be negative, which means that we must have

| (13.20) |

In other words, for a given value of the binding energy Eb there is a maximum value for the angular momentum. This maximum value makes the square root zero, which means that the aphelion and the perihelion are the same — i. e., the orbit is circular. Thus, among all orbits with a given binding energy, the circular orbit has the maximum angular momentum.

_____________________________________

In this chapter we ask an apparently simple question: How can the idea of potential energy be extended to the relativistic case? The answer to this question is unexpectedly complex, but it leads us to immensely fruitful results. In particular, it prompts us to investigate the idea of potential momentum, which results ultimately in gauge theory, of which electromagnetism is an example.

Along the way we show that conservation of four-momentum has an unexpected consequence — the idea of force at a distance is inconsistent with the theory of relativity. This means that momentum and energy must be carried between interacting particles by another type of particle that we call an intermediary particle. These particles are virtual in the sense that they don’t have their real-world mass when acting in this role.

In relativistic quantum mechanics, we find that particles can take on negative energies. Feynman’s interpretation of this fact is discussed, which leads us to a model for antiparticles.

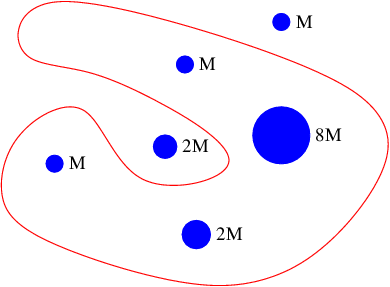

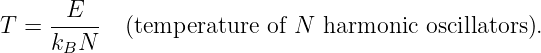

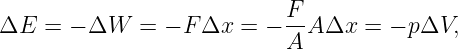

For a free, non-relativistic particle of mass m, the total energy E equals the kinetic energy K and is related to the momentum Π of the particle by

| (14.1) |

(Note that we have ignored the contribution of the rest energy to the total energy here.) In the non-relativistic case, the momentum is Π = mv, where v is the particle velocity.

If the particle is not free, but is subject to forces associated with a potential energy U(x,y,z), then equation (14.1) must be modified to account for the contribution of U to the total energy:

| (14.2) |

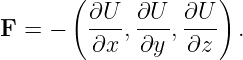

The force on the particle is related to the potential energy by

| (14.3) |

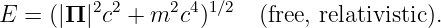

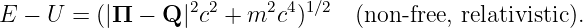

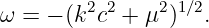

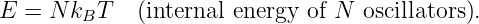

For a free, relativistic particle, we have

| (14.4) |

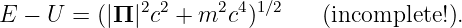

The obvious way to add forces to the relativistic case is by rewriting equation (14.4) with a potential energy, in analogy with equation (14.2):

| (14.5) |

Unfortunately, equation (14.5) is incomplete, because we have subtracted U from the energy E without subtracting a corresponding term from the momentum Π as well. However, Π = (Π,E∕c) is a four-vector, so an equation with something subtracted from just one of the components of this four-vector is not relativistically invariant. In other words, equation (14.5) doesn’t obey the principle of relativity, and therefore cannot be correct!

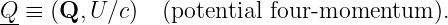

How can we fix this problem? One way is to define a new four-vector with U∕c being its timelike part and some new vector Q being its spacelike part:

| (14.6) |

We then subtract Q from the momentum Π. When we do this, equation (14.5) becomes

| (14.7) |

The quantity Q is called the potential momentum and Q is the potential four-momentum.

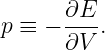

Some additional terminology is useful. We define

| (14.8) |

as the kinetic momentum for reasons discussed below. In order to avoid confusion, we rename Π the total momentum.1 Thus, the total momentum equals the kinetic plus the potential momentum, in analogy with energy.

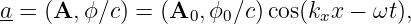

So far, we have shown that the introduction of a potential momentum complements the potential energy so as to make the energy-momentum relationship for a particle relativistically invariant. However, we as yet have no idea what causes potential momentum nor what it does to the affected particle. We shall put off answering the former question and address only the latter at this point. A hint comes from the corresponding behavior of energy. The total energy of a particle is related to the quantum mechanical frequency ω of the particle, and the total momentum is related to its wave vector k:

| (14.9) |

However, the kinetic energy2 and the kinetic momentum are related to the particle’s velocity v:

| (14.10) |

where v = |v|.

The relationship between kinetic momentum and velocity can be proven by dividing equation (14.7) by ℏ to obtain a dispersion relation and then computing the group velocity, which we equate to the particle velocity. However, we will not do this here.

____________________________

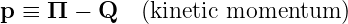

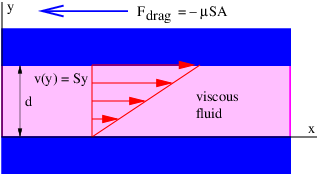

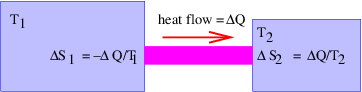

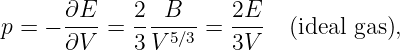

Let us now study a phenomenon that depends on the existence of potential momentum. If the potential energy of a particle is zero and both the kinetic and potential momenta point in the ±x direction, the total energy equation (14.7) for the particle becomes

![E = [(Π - Q )2c2 + m2c4 ]1∕2 = (p2c2 + m2c4 )1∕2.](book230x.png) | (14.11) |

Since its total energy E is conserved, the magnitude of the kinetic momentum p of the particle doesn’t change according to the above equation. Thus, if a region of non-zero potential momentum is encountered, the total momentum of the particle must change so as to keep the kinetic momentum constant. This results in a change in the wavelength of the matter wave associated with the particle. In particular, if the potential momentum points in the same direction as the kinetic momentum, the total momentum is increased and the wavelength decreases, while a potential momentum pointing in the direction opposite the kinetic momentum results in an increase in wavelength.

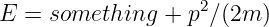

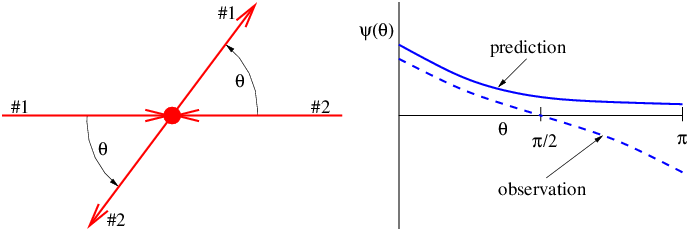

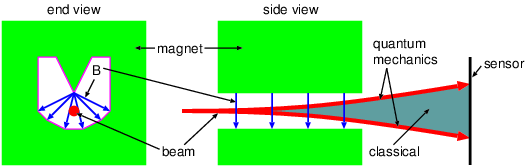

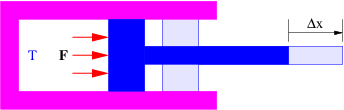

Figure 14.1 illustrates what might happen to a particle moving through a channel that splits into two sub-channels for an interval. If we arrange to have non-zero potential momenta pointing in opposite directions in the sub-channels, the wavelength of the particle will be different in the two regions. At the end of the interval, the waves recombine, interfering constructively or destructively, depending on the magnitude of the phase difference between them. If destructive interference occurs, then the particle cannot pass. The potential momentum thus acts as a valve controlling the flow of particles through the channel. This is an example of the Aharonov-Bohm effect.

In the Aharonov-Bohm effect, the potential momentum didn’t result in any force on the particle — its only manifestation was to change the particle’s wavelength. In such situations the potential momentum’s presence is only revealed by quantum mechanical effects.

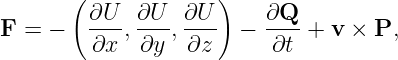

The potential momentum has more of an influence on the non-quantum world when the problem is two or three-dimensional or when the potential momentum is changing with time. The total force on a particle due to all possible effects involving the potential energy and the potential momentum is given by

| (14.12) |

where v is the particle velocity and P is a vector obtained from the potential momentum vector as follows:

| (14.13) |

This is unexpectedly complicated. However, equation (14.12) consists of three parts. The first part involves derivatives of the potential energy and is exactly the same as in the non-relativistic case. The new effects are confined to the second and third parts, -∂Q∕∂t and v × P. A full derivation of these equations involves rather complex mathematics. However, it is possible to understand the origin of these additional contributions to the force by looking at a couple of simple examples.

A matter wave impingent on a discontinuity in potential momentum is refracted, just as it is refracted by a discontinuity in potential energy. Refraction of a matter wave packet means that the velocity of the associated particle changes as it moves across the interface. This means that the particle undergoes an acceleration, implying that it is subject to a force.

As in the case of Snell’s law for optics, the frequency of a matter wave doesn’t change as it crosses such a discontinuity in potential momentum. Furthermore, neither does the component of the wave vector parallel to the discontinuity. These two conditions together ensure phase continuity at the interface.

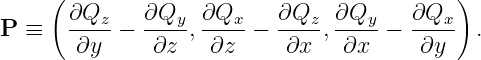

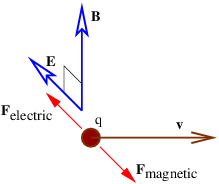

____________________________

Figure 14.2 shows an example of what happens when a wave encounters a series of parallel slabs with increasing values of Q. The y component of the wave vector doesn’t change as the wave crosses each of the interfaces between slabs, for reasons discussed above. Hence, Πy = ℏky doesn’t change either, which means that dΠy∕dx = 0. The y component of kinetic momentum, py = Πy - Qy, must therefore decrease as Qy increases, as illustrated in figure 14.2.

____________________________

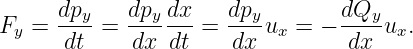

Newton’s second law tells us that the y component of the force on the particle associated with the wave is just the time derivative of the y component of the kinetic momentum:

| (14.14) |

In the last step of this equation we used the fact that dΠy∕dx = 0.

The x component of the force can be obtained by similar reasoning, using the additional information that the speed, and hence the magnitude of the kinetic momentum, p2 = p x2 + p y2, doesn’t change under the influence of the potential momentum:

| (14.15) |

Aside from assuming that p2 = constant, we have used the relationships px = (p2 - p y2)1∕2 and p y∕px = uy∕ux. Equations (14.14) and (14.15) constitute a special case of equations (14.12) and (14.13) which is valid when Q points in the y direction and is a function only of x.

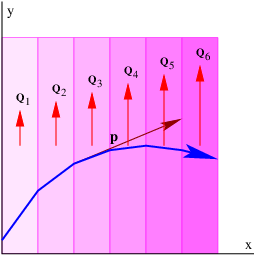

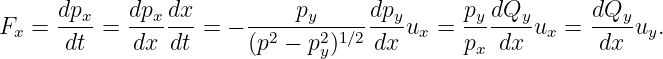

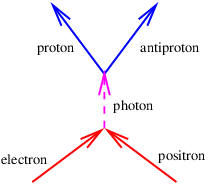

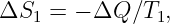

The necessity for the term -∂Q∕∂t in equation (14.12) is easily understood from the following argument, which is illustrated in figure 14.3. The example in the previous section showed that a particle moving in the +x direction with velocity ux through a field of increasing Qy (left panel of figure 14.3) experiences a force in the -y direction equal to Fy = -(dQy∕dx)ux. However, viewing this same process from a reference frame in which the particle is stationary (right panel of this figure), we see that the potential momentum at the position of the particle increases with time at the rate dQy∕dt. The particle is not moving in this reference frame, so the term v×P = 0. However, the stationary particle must still experience the above force in this reference frame in order to satisfy the principle of relativity.

Noting that dQy∕dt = (dQy∕dx)ux, we see that equation (14.12) provides this force via the term -∂Q∕∂t in the reference frame moving with the particle. Thus, the time derivative term in equation (14.12) is needed to maintain the principle of relativity; the same force occurs in the two different reference frames but originates from the term v × P in the original reference frame and the term -∂Q∕∂t in the frame moving with the particle.

Arguments similar to these were actually made by Einstein in his original 1905 paper on relativity.

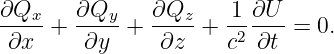

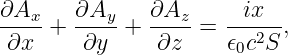

It turns out that the four components of the potential four-momentum are not independent, but are subject to the condition

| (14.16) |

This is called the Lorenz condition. The physical meaning of this condition will become clear when we study electromagnetism.3

The theory of potential momentum is only one of three ways in which the idea of potential energy can be extended to the relativistic case. This theory is called gauge theory for obscure historical reasons. Gauge theory is important because electromagnetism as well as the theories of weak and strong sub-nuclear interactions are all of this type.

____________________________

Gravity is the only fundamental force that does not take the form of a gauge theory. Instead, gravity takes the form of one of two other possible relativistic extensions of potential energy. This theory is called general relativity. The gravitational force in general relativity can be interpreted geometrically as a consequence of the curvature of spacetime. Mathematically, it is far too difficult to pursue here.

The third relativistic extension of potential energy considers potential energy to be a field which alters the rest energy of particles. High energy physicists believe that the elementary particles gain their mass by this mechanism. The field is called the Higgs field after the English physicist who first proposed this theory, Peter Higgs. The recent discovery of the Higgs boson at CERN’s Large Hadron Collider in Geneva, Switzerland supports this idea.

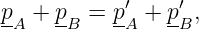

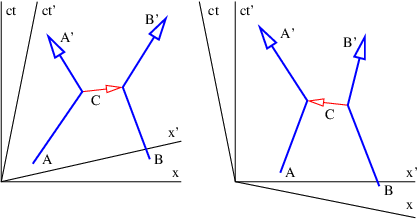

We earlier introduced the ideas of energy and momentum conservation. In other words, if we have a number of particles isolated from the rest of the universe, each with momentum pi and energy Ei, then particles may be created and destroyed and they may collide with each other.4 In these interactions the energy and momentum of each particle may change, but the sum total of all the energy and the sum total of all the momentum remains constant with time:

| (14.17) |

The expression is simpler in terms of four-momentum:

| (14.18) |

At this point a statement such as the one above should ring alarm bells. Just what does it mean to say that the total energy and momentum remain constant with time in the context of relativity? Which time? The time in which reference frame?

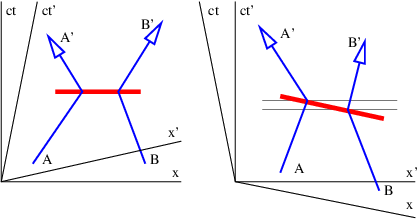

Figure 14.4 illustrates the problem. Suppose two particles exchange four-momentum remotely at the time indicated by the fat horizontal bar in the left panel of figure 14.4. Conservation of four-momentum implies that

| (14.19) |

where the subscripted letters correspond to the particle labels in figure 14.4. Primed values refer to the momentum after the exchange while no primes indicates values before the exchange.

Now view the exchange from the reference frame in the right panel of figure 14.4. A problem with four-momentum conservation exists in the region between the thin horizontal lines. In this region particle B has already transferred its four-momentum, but it has yet to be received by particle A. In other words, four-momentum is not conserved in this reference frame!

____________________________

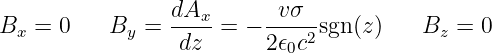

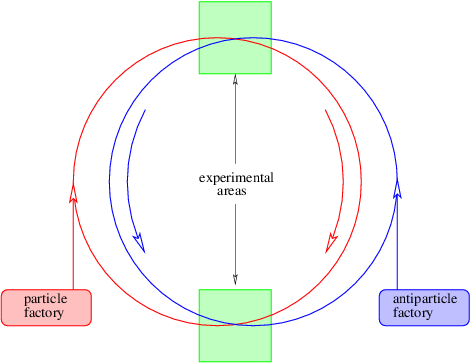

This problem is so serious that we must eliminate the concept of force at a distance from the repertoire of physics. The only way to have particles interact remotely and still conserve four-momentum in all reference frames is to assume that all remote interactions are mediated by another particle, as indicated in figure 14.5. In other words, momentum and energy are transferred from particle A to particle B in a two step process. First, particle A emits particle C in a manner which conserves the four-momentum. Second, particle C is absorbed by particle B in a similarly conservative interaction. Four-momentum is conserved at all times in all reference frames in this picture.

Another problem is evident from figure 14.5. As drawn, the velocity of the intermediary particle exceeds the speed of light. This is reflected in the fact that different reference frames yield contradictory results as to whether the intermediary particle moves from A to B or B to A. These difficulties turn out to be much less severe than those arising from non-locality. Let us address them in sequence.

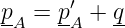

For sake of definiteness, let us view the emission of particle C by particle A in a reference frame in which the velocity of particle A is just reversed in the emission process. In this case the four-momentum before the emission is pA = (p,E∕c), where E = (p2c2 + m2c4)1∕2. After the emission we have p′A = (-p,E∕c). Conservation of four-momentum in the emission process requires that

| (14.20) |

where q is the four-momentum of particle C. From the above assumptions it is clear that

| (14.21) |

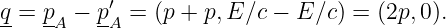

Suppose that the real, measured mass of particle C is mC. This conflicts with the apparent or virtual mass of this particle in its flight from A to B, which is

| (14.22) |

where q ≡|q| is the momentum transfer. Note that the apparent mass is imaginary because the four-momentum is spacelike.

Classically, this discrepancy in the apparent and actual masses of the particle C would simply indicate that the process wasn’t possible. However, recall that the uncertainty principle allows there to be an uncertainty in the mass if it doesn’t persist for too long in terms of the proper time interval along the particle’s world line. The statement of this law is ΔμΔτ ≈ 1. Expressed in terms of mass, this becomes

| (14.23) |

Let us convert the proper time to an interval since the world line of particle C is horizontal in the reference frame in which we are viewing it. Ignoring the factor of i, Δτ = ΔI∕c. We finally compute the absolute value of the mass discrepancy as follows: |mC -iq∕c| = [(mC -iq∕c)(mC + iq∕c)]1∕2 = (m C2 + q2∕c2)1∕2. Solving for I yields the approximate maximum invariant interval that particle C can move from its source point while keeping its erroneous mass hidden by the uncertainty principle:

| (14.24) |

A particle forced into having an apparent mass different from its actual mass is called a virtual particle. The interaction shown in figure 14.5 can only take place if particles A and B come closer to each other than the distance ΔI. This argument thus produces an estimate for the “range” of an interaction with momentum transfer 2p and intermediary particle mass mC.

Two distinct possibilities exist. If the intermediary particle is massless (a photon, for instance), then the range of the interaction is inversely related to the momentum transfer: ΔI ≈ℏ∕q. Thus, small momentum transfers can occur at large distances. An interaction of this type is called “long range”. On the other hand, if the intermediary particle has mass, the range is simply ΔI ≈ℏ∕mCc when q ≪ mCc. The range is thus constant and inversely proportional to the mass of the intermediary particle for low momentum transfers. For large momentum transfer, i. e., when q ≫ mCc, the range decreases from this value with increasing momentum transfer, as in the case of a massless intermediary particle.

According to quantum mechanics, particles are represented by waves. The absolute square of the wave amplitude represents the probability of finding the particle. In gauge theory the potential four-momentum performs this role for the virtual particles intermediary interactions. Thus a larger potential four-momentum at some point means a higher probability of finding the related virtual particles at that point.

Figure 14.5 illustrates another oddity in the role of intermediary particles in collisions. In the unprimed frame, particle C appears to be emitted by particle A and absorbed by particle B. In the primed frame the reverse is true; it appears to be emitted by B and absorbed by A. These judgements are based on the fact that the A vertex occurs earlier than the B vertex in the unprimed frame, while the B vertex occurs earlier in the primed frame. However, since these distinctions are based on time ordering in different reference frames of events separated by a spacelike interval, they are inherently not relativistically invariant. Since the principle of relativity states that physical laws are the same in all inertial reference frames, we have a conceptual problem to overcome.

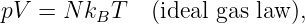

A related problem has to do with the computation of energy from mass and momentum. The solution of equation E2 = p2c2 + m2c4 for the energy has a sign ambiguity that we have so far ignored:

| (14.25) |

A natural tendency would be to omit the minus sign and just consider positive energies. However, this would be a mistake — experience with quantum mechanics indicates that both solutions must be considered.

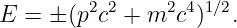

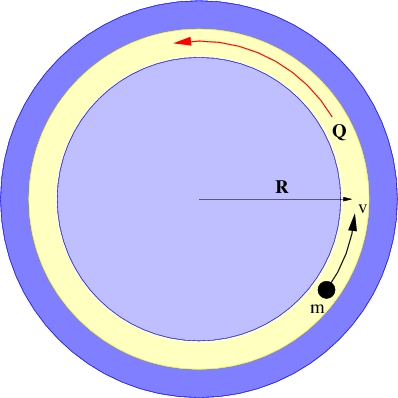

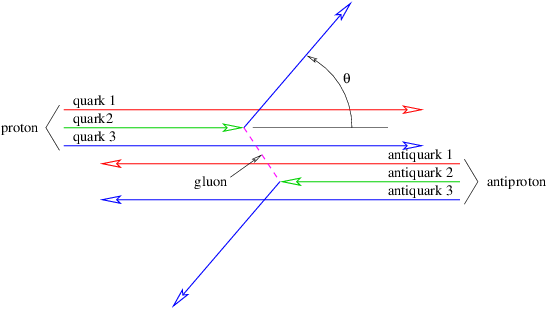

Richard Feynman won the Nobel Prize in physics largely for developing a consistent interpretation of the above negative energy solutions, which we now relate. Notice that the four-momentum points backward in time in a spacetime diagram if the energy is negative. Feynman suggested that a particle with four-momentum p is equivalent to the corresponding antiparticle with four-momentum -p. Thus, we interpret a particle with momentum p and energy E < 0 as an antiparticle with momentum -p and energy -E > 0.

Antiparticles are known to exist for all particles. If a particle and its antiparticle meet, they can annihilate, creating one or more other particles. Correspondingly, if energy is provided in the right form, a particle-antiparticle pair can be created.

____________________________

Suppose a particular kind of particle, call it an A particle, produces a B particle when it annihilates with its antiparticle A. This is illustrated in the left panel in figure 14.6. In Feynman’s view, this process is equivalent to the scattering of an A particle backward in time by a B particle, the scattering of an A backward in time by a B particle, the creation of an AA pair moving backward in time by a B particle (an antiB), and the emission of a B particle by an A particle moving forward in time.

The statement “moving backward in time” has stimulated generations of physics students to contemplate the possibility that Feynman’s picture makes time travel possible. As far as we know, this is not so. The key phrase is equivalent to. In other words, causality still works forward in time as we have come to expect.

The real utility of the “backward in time” picture is that it makes calculations easier, since processes which are normally thought of as being very different turn out to have the same mathematical form.

Returning to the ambiguity shown in figure 14.5, it turns out that it does not matter whether the picture in the left or right panel is chosen. According to the Feynman view the two processes are equivalent if one small correction is made — if the intermediary particle going from left to right is a C particle, then the intermediary particle going from right to left in the other picture is a C particle, or an antiC. It is immaterial whether the arrow representing either the C or the C points forward or backward in time. The key point is that if an arrow points into a vertex, the four-momentum of that particle contributes to the input side of the momentum-energy budget for that vertex. If an arrow points away from a vertex, then the four-momentum contributes to the output side.

If |H|≪ m and p2 ≪ m2c2, show how this equation may be approximated as

and determine the form of “something” in terms of H. Is this theory distinguishable from the theory involving potential energy at nonrelativistic velocities?

_____________________________________

_____________________________________

_____________________________________

Compute the group velocity of such a particle. Convert the result into an expression in terms of momentum rather than wavenumber. Compare this to the corresponding expression for a positive energy particle and relate it to Feynman’s explanation of negative energy states.

where q is the charge on the particle and ±mc2 is the rest energy, with the ± corresponding to positive and negative energy states. Assume that |qϕ|≪ mc2.

Hint: Recall that the total energy is always rest energy plus kinetic energy (zero in this case) plus potential energy.

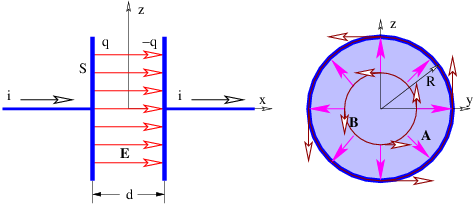

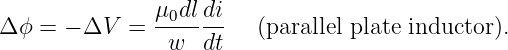

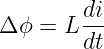

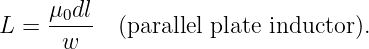

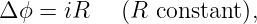

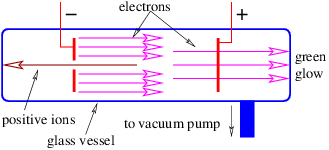

In this chapter we begin the study of electromagnetism. The forces on charged particles due to electromagnetic fields are introduced and related to the general case of force on a particle by a gauge field. The principles of electric motors and generators are then addressed as an example of such forces in action.

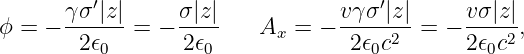

Electromagnetism is a gauge theory. Particles that have a property called electric charge are subject to forces exerted by the gauge fields of electromagnetism. The potential four-momentum Q = (Q,U∕c) of a particle with charge q in the presence of the electromagnetic four-potential a is just

| (15.1) |

In the simplest case the four-potential represents the amplitude for finding the intermediary particle associated with the electromagnetic gauge field. This particle has zero mass and is called the photon. If more than one photon is present, the interpretation of a becomes more complicated. This issue will be considered later.

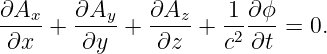

The four-potential has space and time components A and ϕ∕c such that a = (A,ϕ∕c). The quantity A is called the vector potential and ϕ is called the scalar potential. The scalar and vector potential are related to the potential energy U and potential momentum Q of a particle of charge q by

| (15.2) |

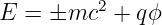

The Lorenz condition written in terms of A and ϕ is

| (15.3) |

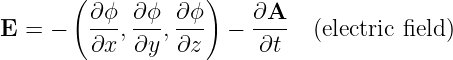

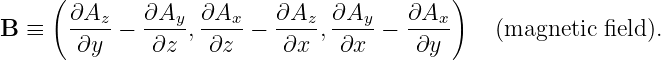

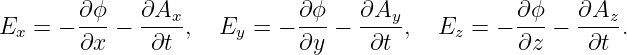

Electric and magnetic fields manifest themselves observationally by the forces that they cause. These vector quantities are related to the scalar and vector potentials as follows:

| (15.4) |

| (15.5) |

Note that arbitrary scalar and vector constants may be added respectively to ϕ and A without changing either the electric or magnetic fields, since the latter are functions only of space and time derivatives of the former. This is a simple example of the concept of gauge invariance in action. We will see later that not just a constant, but any time-independent vector function A′(x,y,z) may be added to A with similar null results, as long as ∂Ax′∕∂y = ∂Ay′∕∂x, etc. Gauge invariance is an important part of gauge theory, but a full understanding depends on more sophisticated mathematics than currently at our disposal.

By comparison of equations (15.4) and (15.5) with the general expression for force in gauge theory, we find that the electromagnetic force on a particle with charge q is

where v is the velocity of the particle and where we have used equations (15.2) and (15.4). For historical reasons this is called the Lorentz force.

We now explore some examples of the motion of charged particles under the influence of electric and magnetic fields.

Suppose a particle with charge q is exposed to a constant electric field Ex in the x direction. The x component of the force on the particle is thus Fx = qEx. From Newton’s second law the acceleration in the x direction is therefore ax = Fx∕m = qEx∕m where m is the mass of the particle. The behavior of the particle is the same as if it were exposed to a constant gravitational field equal to qEx∕m.

If ∂A∕∂t = 0, then the electric force on a charged particle is

| (15.7) |

This force is conservative, with potential energy U = qϕ. Recalling that the total energy, E = K + U, of a particle under the influence of a conservative force remains constant with time, we can infer that the change in the kinetic energy with position of the particle is just minus the change in the potential energy: ΔK = -ΔU. Notice in particular that if the particle returns to its initial position, the change in the potential energy is zero and the kinetic energy recovers its initial value.

If ∂A∕∂t≠0, then there is the possibility that the electric force is not conservative. Recall that the magnetic field is derived from A. Interestingly, a necessary and sufficient criterion for a non-conservative electric force is that the magnetic field be changing with time. This result was first inferred experimentally by the English physicist Michael Faraday in 1831 and at nearly the same time by the American physicist Joseph Henry. It will be further explored later in this chapter.

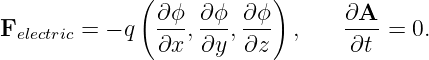

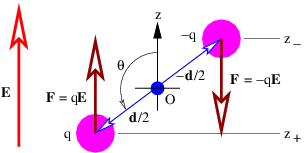

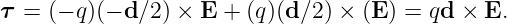

____________________________

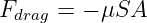

Let us now imagine a “dumbbell” consisting of positive and negative charges of equal magnitude q separated by a distance d, as shown in figure 15.1. If there is a uniform electric field E, the positive charge experiences a force qE, while the negative charge experiences a force -qE. The net force on the dumbbell is thus zero.

The torque acting on the dumbbell is not zero. The total torque acting about the origin in figure 15.1 is the sum of the torques acting on the two charges:

| (15.8) |

The vector d can be thought of as having a length equal to the distance between the two charges and a direction going from the negative to the positive charge.

The quantity p = qd is called the electric dipole moment. (Don’t confuse it with the momentum!) The torque is just

| (15.9) |

This shows that the torque depends on the dipole moment, or the product of the charge and the separation. Thus, halving the separation and doubling the charge results in the same dipole moment.

The tendency of the torque is to rotate the dipole so that the dipole moment p is parallel to the electric field E. The magnitude of the torque is given by

| (15.10) |

where the angle θ is defined in figure 15.1 and p = |p| is the magnitude of the electric dipole moment.

The potential energy of the dipole is computed as follows: The scalar potential associated with the electric field is ϕ = -Ez where E is the magnitude of the field, assumed to point in the +z direction. Thus, the potential energy of a single particle with charge q is U = qϕ = -qEz. The total potential energy of the dipole is the sum of the potential energies of the individual charges:

where z+ and z- are the z positions of the positive and negative charges. The equating of z+ - z- to d cos(θ) may be verified by examining the geometry of figure 15.1.The tendency of the electric field to align the dipole moment with itself is confirmed by the potential energy formula. The potential energy is lowest when the dipole moment is aligned with the field and highest when the two are anti-aligned.

____________________________

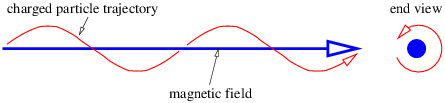

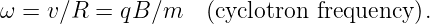

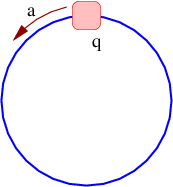

The magnetic force on a particle with charge q moving with velocity v is Fmagnetic = qv × B, where B is the magnetic field. The magnetic force is directed perpendicular to both the magnetic field and the particle’s velocity. Because of the latter point, no work is done on the particle by the magnetic field. Thus, by itself the magnetic force cannot change the magnitude of the particle’s velocity, though it can change its direction.

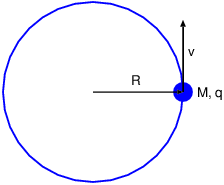

If the magnetic field is constant, the magnitude of the magnetic force on the particle is also constant and has the value Fmagnetic = qvB sin(θ) where v = |v|, B = |B|, and θ is the angle between v and B. If the initial velocity is perpendicular to the magnetic field, then sin(θ) = 1 and the force is just Fmagnetic = qvB. The particle simply moves in a circle with the magnetic force directed toward the center of the circle. This force divided by the mass m must equal the particle’s centripetal acceleration: v2∕R = a = F magnetic∕m = qvB∕m in the non-relativistic case, where R is the radius of the circle. Solving for R yields

| (15.12) |

The angular frequency of revolution is

| (15.13) |

Notice that this frequency is a constant independent of the radius of the particle’s orbit or its velocity. This is called the cyclotron frequency.

If the initial velocity is not perpendicular to the magnetic field, then the particle still has a circular component of motion in the plane normal to the field, but also drifts at constant speed in the direction of the field. The net result is a spiral motion in the direction of the magnetic field, as illustrated in figure 15.2. The radius of the circle is R = mvp∕(qB) in this case, where vp is the component of v perpendicular to the magnetic field.

____________________________

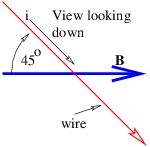

If we have perpendicular electric and magnetic fields as shown in figure 15.3, then it is possible for a charged particle to move such that the electric and magnetic forces simply cancel each other out. From the Lorentz force equation (15.6), the condition for this happening is E + v × B = 0. If E and B are perpendicular, then this equation requires v to point in the direction of E × B (i. e., normal to both vectors) with the magnitude v = |E|∕|B|. This, of course, is not the only possible motion under these circumstances, just the simplest.

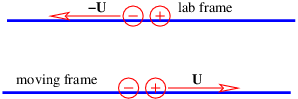

It is interesting to consider this situation from the point of view of a reference frame that is moving with the charged particle. In this reference frame the particle is stationary and therefore not subject to the magnetic force. Since the particle is not accelerating, the net force, which in this frame consists only of the electric force, is zero. Hence, the electric field must be zero in the moving reference frame.

____________________________

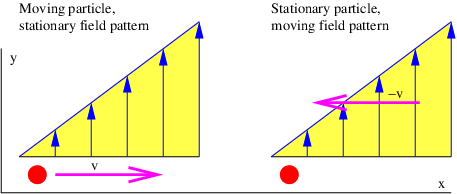

This argument shows that the electric field perceived in one reference frame is not necessarily the same as the electric field perceived in another frame. Figure 15.4 shows why this is so. The left panel shows the situation in the reference frame moving to the right, which is the unprimed frame in this picture. The charged particle is stationary in this reference frame. The four-potential is purely spacelike, having no time component ϕ∕c. Assuming that a is constant in time, there is no electric field, and hence no electric force. Since the particle is stationary in this frame, there is also no magnetic force. However, in the primed reference frame, which is moving to the left relative to the unprimed frame and therefore is equivalent to the original reference frame in which the particle is moving to the right, the four-potential has a time component, which means that a scalar potential and hence an electric field is present.

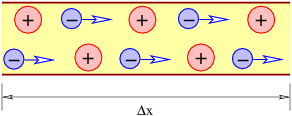

So far we have talked mainly about point charges moving in free space. However, many practical applications of electromagnetism have charges moving through a conductor such as copper. A conductor is a material in which electrically charged particles can freely move. An insulator is a material in which charged particles are fixed in place. Practical conductors are often surrounded by insulators in order to confine the motion of charge to particular paths.

The current through a wire is defined as the amount of charge passing through the wire per unit time. When defining current, one needs to decide which direction constitutes a positive current for the problem at hand, i. e., the direction in which the positive charge is moving. If the current consists of particles carrying negative charge, then the direction of the current is opposite the direction of the motion of the particles.

Metals tend to be good conductors, while glass, plastic, and other non-metallic materials are usually insulators. All materials contain both positive and negative charges. In metals, negatively charged electrons can escape from atoms and are free to move about the material. When atoms lose one or more electrons, they become positively charged. Atoms tend to be fixed in place. Since the electron charge is negative, the current in a wire actually has a direction opposite the direction of motion of the electrons, as noted above.

____________________________

If a conductor is in the form of a wire, we can compute the magnetic force on the wire if we know the number of mobile particles per unit length of wire N, the charge on each particle q, and the speed v with which they are moving down the wire. The total force on a length of wire L is F = qNLvn × B, where n is a unit vector pointing in the direction of motion of the particles through the wire. The quantity i ≡ qNv is called the current in the wire. It equals the amount of charge per unit time flowing down the wire. Written in terms of the current, the force on a length L of the wire is

| (15.14) |

____________________________

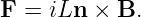

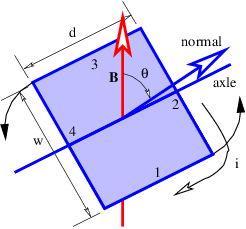

Figure 15.6 shows a rectangular loop of wire mounted on an axle in a magnetic field. A current i exists in the loop as shown. The currents in loop segments 2 and 4 experience a force parallel to the axle. These forces generate no net torque. However, the magnetic forces on loop segments 1 and 3 are each F = idB in magnitude, where B = |B| is the magnitude of the magnetic field. Together these forces generate a counterclockwise torque about the axle equal to τ = 2F(w∕2) sin(θ) = iwdB sin(θ). This can be represented in vector form as

| (15.15) |

where m is a vector with magnitude iwd and direction normal to the loop as shown in figure 15.6. The vector m is called the magnetic dipole moment.

The loop can actually be any shape, not just rectangular. In the general case the magnitude of the magnetic moment equals the current i times the area S of the loop:

| (15.16) |

In the above example the area is S = wd. The direction of m is determined by the right hand rule; curl the fingers on your right hand around the loop in the direction of the current and your thumb points in the direction of m.

In analogy with the electric dipole in an electric field, the potential energy of a magnetic dipole in a magnetic field is

| (15.17) |

Figure 15.6 illustrates the principle of an electric motor. A motor consists of multiple loops of wire on an axle carrying a current in a magnetic field. The torque on the axle turns the loops so that the magnetic moment is parallel to the field. The angular momentum of the loops carries the rotation of the axle through the zero torque region, which occurs when the magnetic moment is either perfectly parallel or perfectly anti-parallel (i. e., pointing in the opposite direction) to the field. At this point either the magnetic field is reversed by some mechanism or the magnetic dipole is reversed by making the current circulate around the loops in the opposite direction. The torque due to the magnetic force then turns the axle through another half-turn, whereupon the field or the magnetic moment is again reversed, and so on.

As was shown earlier, the electric field is derived from two different sources, spatial derivatives of the scalar potential and time derivatives of the vector potential:

| (15.18) |

In time independent situations the vector potential part drops out and we are left with a dependence only on the scalar potential. In this case a particle with charge q has an electrostatic potential energy U = qϕ, which means that the electric force is conservative. However, in the time dependent situation there is no guarantee that the part of the electric field derived from the vector potential will be conservative.

____________________________

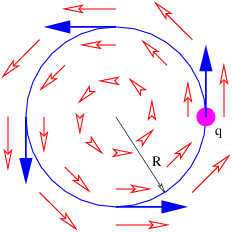

An example of a non-conservative electric field occurs when we have

| (15.19) |

where C is a constant. In this case the electric and magnetic fields are

| (15.20) |

The magnetic field points in the -z direction and increases in magnitude with time. The electric field vectors are shown in figure 15.7. Notice that a positively charged particle moving in a counterclockwise circle as shown is continually being accelerated in the direction of motion, and is therefore continually gaining energy. This is impossible with a conservative force.

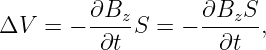

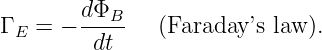

How much energy is gained by a particle with charge q moving in a complete circle of radius R under the above circumstances? The magnitude of the electric field at this radius is E = CR, so the force on the particle is F = qCR. The circumference of the circle is 2πR, so the total work done by the electric field in one revolution is just ΔW = 2πRF = 2πqCR2 = 2qCS, where S = πR2 is the area of the circle. Let us define ΔV = ΔW∕q = 2CS. For historical reasons this is called the electromotive force or EMF. This is deceptive terminology, because in fact ΔV doesn’t have the dimensions of force — it is really just the work per unit charge done on a particle making a single loop around the circle in figure 15.7.

Recall that the z component of the magnetic field in this case is Bz = -2Ct. Note that the time derivative of the magnetic field is just ∂Bz∕∂t = -2C. Comparison with the equation for electromotive force shows us that

| (15.21) |

where the area is brought inside the time derivative since it is constant in time.

____________________________

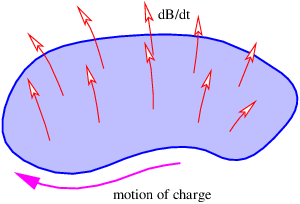

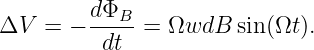

Notice that the argument of the time derivative in the above equation is the component of B perpendicular to the plane of the loop. The loop area multiplied by the normal component of B is the magnetic flux through the loop: ΦB ≡ BnormalS. The generalization of equation (15.21) for any loop fixed in space is expressed as

| (15.22) |

It is valid for arbitrary loop and magnetic field configurations, not just for the simple case we have been investigating.

The minus sign in equation (15.22) means the following: If the fingers on your right hand curl around the loop in the direction opposite to the direction that causes a positive charge to gain energy, then your thumb points in the direction of the time rate of change of the magnetic flux passing through the loop. This is illustrated in figure 15.8.

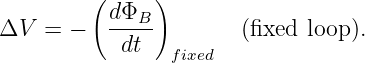

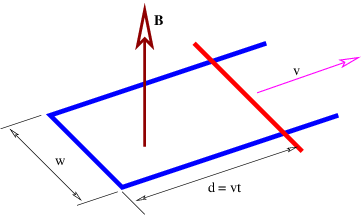

If a loop is changing size or shape with time, then the magnetic force also acts on charges in the loop. The work per unit charge done in a segment j of the loop defined by the displacement vector Δlj is

| (15.23) |

where vj is the velocity with which the loop segment is moving and Bj is the magnetic field at the location of the segment. To get the total EMF around the loop due to the magnetic force, the ΔV j values from each segment must simply be summed up and added to the right side of equation (15.22):

| (15.24) |

It turns out that the part of ΔV resulting from a moving or deforming loop equals minus the time rate of change of magnetic flux through the loop due solely to loop movement and deformation. Therefore, we can rewrite equation (15.24) in the more compact form

| (15.25) |

where the time derivative of the magnetic flux now includes both changes in the magnetic field and changes in the position, shape, and orientation of the loop. This is called Faraday’s law, even though it is a hybrid of equation (15.22), which is Faraday’s law as generally defined in advanced physics and the Lorentz force law as expressed in equation (15.23).

____________________________

The electric generator is perhaps the best known application of Faraday’s law. Figure 15.9 shows a rectangular loop of wire fixed to an axle that rotates at an angular rate Ω. The magnetic flux through the loop thus varies with time according to ΦB = wdB cos(θ) = wdB cos(Ωt). The EMF around the loop is thus

| (15.26) |

In a real generator there are many loops forming a coil of wire and the ends of the coil are brought out through the axle so that the resulting current can be tapped for practical use.

The EMF ΔV has the same units as the scalar potential ϕ. What is the difference between the two quantities? Both are related to the work done per unit charge by the electric field on a particle moving through the field. However, recall that the electric field is composed of two parts:

| (15.27) |

Δϕ = ϕ2 - ϕ1 is minus the work done on the particle in going from point 1 to point 2 by the part of the electric field associated with the scalar potential; moving to a lower potential results in a release of kinetic energy according to the conservation of energy. On the other hand, ΔV is (plus) the work done per unit charge on a particle by the part of the electric field associated with the time derivative of the vector potential.

Aside from the different sign conventions, there is one other fundamental difference between the two quantities: Δϕ is always zero for closed paths, i. e., paths in which the particle returns to its initial point. This is because point 1 is then the same as point 2, so ϕ1 = ϕ2. This condition doesn’t necessarily apply to the EMF. ΔV often is non-zero for closed particle paths. The electric generator that we have just discussed is an important case in point. The total work done per unit charge by the electric field on a charged particle moving along some path is thus ΔV - Δϕ. The Δϕ term drops out if the path is closed.

_____________________________________

_____________________________________

_____________________________________

_____________________________________

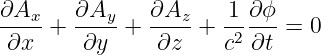

In this chapter we investigate how charge produces electric and magnetic fields. We first introduce Coulomb’s law, which is the basis for everything else in the section. We then discuss Gauss’s law for the electric and magnetic field, drawing on what we learned while using it on the gravitational field. Coulomb’s law and the theory of relativity together show that magnetic fields are generated by moving charge. We then use this fact to compute the magnetic fields from some simple charge distributions. We finish with a discussion of electromagnetic waves.

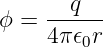

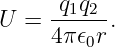

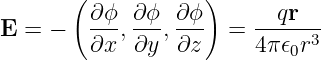

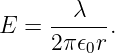

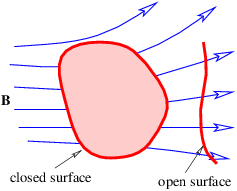

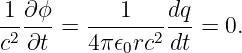

A stationary point electric charge q is known to produce a scalar potential

| (16.1) |

a distance r from the charge. The constant ϵ0 = 8.85 × 10-12 C2 N-1 m-2 is called the permittivity of free space. The vector potential produced by a stationary charge is zero.

The potential energy between two stationary charges is equal to the scalar potential produced by one charge multiplied by the value of the other charge:

| (16.2) |

Notice that it doesn’t make any difference whether one multiplies the scalar potential from charge 1 by charge 2 or vice versa – the result is the same.

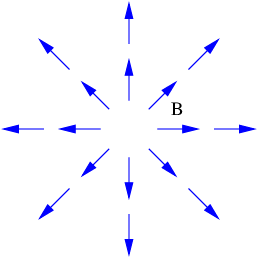

Since r = (x2 + y2 + z2)1∕2, the electric field produced by a charge is

| (16.3) |

where r = (x,y,z) is the vector from the charge to the point where the electric field is being measured. The magnetic field is zero since the vector potential is zero.

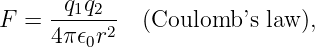

The force between two stationary charges separated by a distance r is the value of one charge multiplied by the electric field produced by the other charge. Thus the magnitude of the force is

| (16.4) |

with the force being repulsive if the charges are of the same sign, and attractive if the signs are opposite. This is called Coulomb’s law.

Equation (16.4) is the electric equivalent of Newton’s universal law of gravitation. Replacing mass by charge and G by -1∕(4πϵ0) in the equation for the gravitational force between two point masses gives us equation (16.4). The most important aspect of this result is that both the gravitational and electrostatic forces decrease as the square of the distance between the particles.

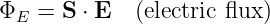

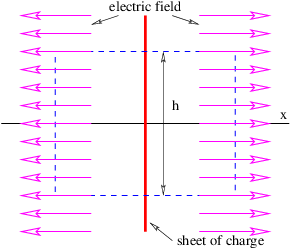

The electric flux is defined in analogy to the gravitational flux as

| (16.5) |

where S is the directed area through which the flux passes. (This is strictly true only for small, flat areas S over which the component of E normal to S can be assumed constant.) Since the electric field obeys an inverse square law, Gauss’s law applies to the electric flux ΦE just as it applies to the gravitational flux. In particular, since the magnitude of the outward electric field a distance r from a charge q is E = q∕(4πϵ0r2), the electric flux through a sphere of radius r (and area 4πr2) concentric with the charge is ES = [q∕(4πϵ 0r2)] × (4πr2) = q∕ϵ 0. This generalizes to an arbitrary distribution of charge as in the gravitational case:

| (16.6) |

where ΦE in this equation is the outward electric flux through a closed surface and qinside is the net charge inside this surface. This is an expression of Gauss’s law for the electric field. Since Gauss’s law for electricity and for gravitation are so similar, we can use all our insights from studying gravity on the electric field case.

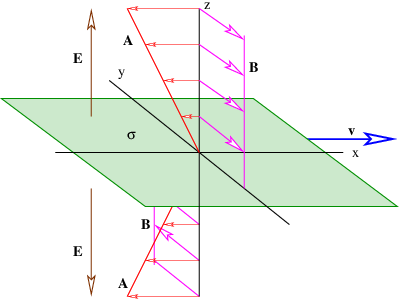

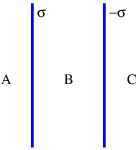

____________________________

Figure 16.1 shows how to set up the Gaussian surface to obtain the electric field emanating from an infinite sheet of charge. We assume a charge density of σ Coulombs per square meter, which means that the amount of charge inside the box is qinside = σhd, where the box has height h and depth d into the page. The total electric flux out of the left and right faces of the box is ΦE = 2Ehd, where E is the magnitude of the electric field on these surfaces. The field is assumed to point away from the charge, and hence out of the box on both faces. Due to the assumed direction of the electric field, there is no electric flux out of any of the other faces of the box.

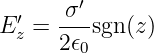

Applying Gauss’s law, we infer that 2Ehd = σhd∕ϵ0, which means that the electric field emanating from a sheet of charge with charge density per unit area σ is

| (16.7) |

The scalar potential associated with this electric field is easily obtained by realizing that equation (16.7) gives the x component of this field — the other components are zero. Using E = -∂ϕ∕∂x, we infer that

| (16.8) |

The absolute value signs around x take account of the fact that the direction of the electric field for negative x is opposite that for positive x.

____________________________

Similar reasoning is used to obtain the electric field due to a line of charge. A sketch of the expected electric field vectors and a Gaussian cylinder coaxial with the line of charge is shown in figure 16.2. If the charge per unit length is λ, the amount of charge inside the cylinder is qinside = λd, where d is the length of the cylinder. The outward electric flux at radius r is ΦE = 2πrdE. Gauss’s law therefore tells us that the electric field at radius r is just

| (16.9) |

In this case E = -∂ϕ∕∂r, so that the scalar potential is

| (16.10) |

____________________________

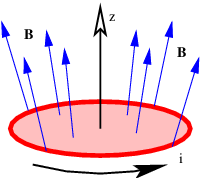

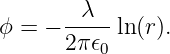

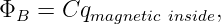

By analogy with Gauss’s law for the electric field, we could write a Gauss’s law for the magnetic field as follows:

| (16.11) |

where ΦB is the outward magnetic flux through a closed surface, C is a constant, and qmagnetic inside is the “magnetic charge” inside the closed surface. Extensive searches have been made for magnetic charge, generally called a magnetic monopole. However, none has ever been found. Thus, Gauss’s law for magnetism can be written

| (16.12) |

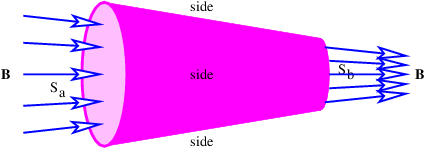

This of course doesn’t preclude non-zero values of the magnetic flux through open surfaces, as illustrated in figure 16.3.

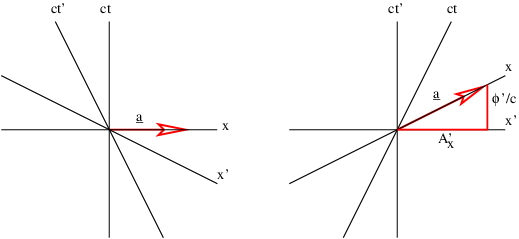

The equation (16.1) for the scalar potential of a point charge is valid only in the reference frame in which the charge q is stationary. By symmetry, the vector potential must be zero. Since ϕ is actually the timelike component of the four-potential, we infer that the four-potential due to a charge is tangent to the world line of the charged particle.

A consequence of the above argument is that a moving charge produces a magnetic field, since the four-potential must have spacelike components in this case.

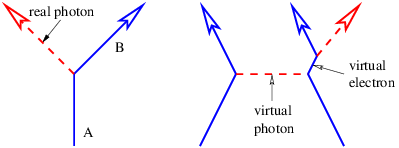

We have shown that electric charge generates both electric and magnetic fields, but the latter result only from moving charge. If we have the scalar potential due to a static configuration of charge, we can use this result to find the magnetic field if this charge is set in motion. Since the four-potential is tangent to the particle’s world line, and hence is parallel to the time axis in the reference frame in which the charged particle is stationary, we know how to resolve the space and time components of the four-potential in the reference frame in which the charge is moving.

____________________________

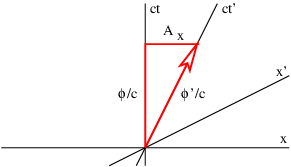

Figure 16.4 illustrates this process. For a particle moving in the +x direction at speed v, the slope of the time axis in the primed frame is just c∕v. The four-potential vector has this same slope, which means that the space and time components of the four-potential must now appear as shown in figure 16.4. If the scalar potential in the primed frame is ϕ′, then in the unprimed frame it is ϕ, and the x component of the vector potential is Ax. Using the spacetime Pythagorean theorem, ϕ′2∕c2 = ϕ2∕c2 - A x2, and relating slope of the ct′ axis to the components of the four-potential, c∕v = (ϕ∕c)∕Ax, it is possible to show that

| (16.13) |

where

| (16.14) |

Thus, the principles of special relativity allow us to obtain the full four-potential for a moving configuration of charge if the scalar potential is known for the charge when it is stationary. From this we can derive the electric and magnetic fields for the moving charge.

____________________________

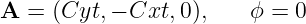

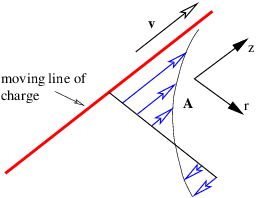

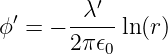

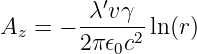

As an example of this procedure, let us see if we can determine the magnetic field from a line of charge with linear charge density in its own rest frame of λ′, aligned along the z axis. The line of charge is moving in a direction parallel to itself. From equation (16.10) we see that the scalar potential a distance r from the z axis is

| (16.15) |

in a reference frame moving with the charge. The z component of the vector potential in the stationary frame is therefore

| (16.16) |

by equation (16.13), with all other components being zero. This is illustrated in figure 16.5.

____________________________

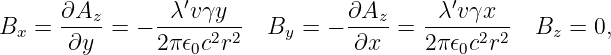

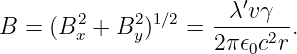

We infer that

| (16.17) |

where we have used r2 = x2 + y2. The resulting field is illustrated in figure 16.6. The field lines circle around the line of moving charge and the magnitude of the magnetic field is

| (16.18) |

There is an interesting relativistic effect on the charge density λ′, which is defined in the co-moving or primed reference frame. In the unprimed frame the charges are moving at speed v and therefore undergo a Lorentz contraction in the z direction. This decreases the charge spacing by a factor of γ and therefore increases the charge density as perceived in the unprimed frame to a value λ = γλ′.

We also define a new constant μ0 ≡ 1∕(ϵ0c2). This is called the permeability of free space. This constant has the assigned value μ0 = 4π × 10-7 N s2 C-2. The value of ϵ0 = 1∕(μ0c2) is actually derived from this assigned value and the measured value of the speed of light. The reasons for this particular way of dealing with the constants of electromagetism are obscure, but have to do with making it easy to relate the values of constants to the experiments used in determining them.

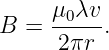

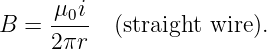

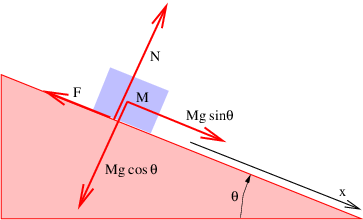

With the above substitutions, the magnetic field equation becomes

| (16.19) |

The combination λv is called the current and is symbolized by i. The current is the charge per unit time passing a point and is a fundamental quantity in electric circuits. The magnetic field written in terms of the current flowing along the z axis is

| (16.20) |

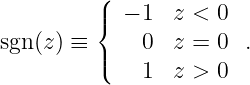

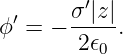

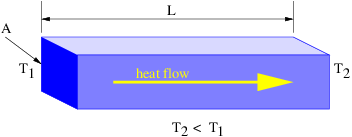

As another example we consider a uniform infinite sheet of charge in the x - y plane with charge density σ′. The charge is moving in the +x direction with speed v. As we showed in the section on Gauss’s law for electricity, the electric field for this sheet of charge in the co-moving reference frame is in the z direction and has the value

| (16.21) |

where we define

| (16.22) |

The sgn(z) function is used to indicate that the electric field points upward above the sheet of charge and downward below it (see figure 16.7).

The scalar potential in this frame is

| (16.23) |

____________________________

In the stationary reference frame in which the sheet of charge is moving in the x direction, the scalar potential and the x component of the vector potential are

| (16.24) |

according to equation (16.13), where σ = γσ′ is the charge density in the stationary frame. The other components of the vector potential are zero. We calculate the magnetic field as

| (16.25) |

where sgn(z) is defined as before. The vector potential and the magnetic field are shown in figure 16.7. Note that the magnetic field points normal to the direction of motion of the charge but parallel to the sheet. It points in opposite directions on opposite sides of the sheet of charge.

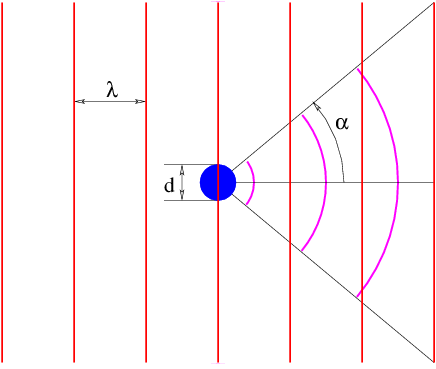

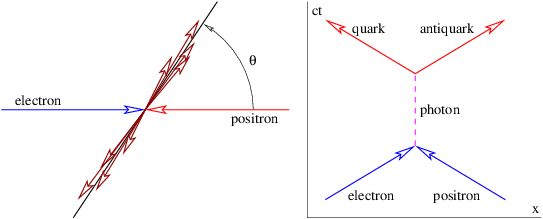

We have found so far that stationary charge produces an electric field while moving charge produces a magnetic field. It turns out that accelerated charge produces electromagnetic radiation. Electromagnetic radiation is nothing more than one or more photons that have zero mass, and are therefore real, not virtual.

____________________________

Acceleration of a charged particle is needed to produce radiation because of the conservation of energy and momentum. The left panel of figure 16.8 shows why. Since a photon carries off energy and momentum, conservation means that the energy and momentum of the emitting particle change due to the emission of a photon. This corresponds in classical mechanics to an acceleration.

The process in the left panel of figure 16.8 actually cannot occur if particles A and B have the same mass. If the mass of the outgoing particle B is less than the mass of the incoming particle A, then this reaction can and does occur. An example is the decay of an atom from a higher energy state to a lower energy state (and hence lower mass), accompanied by the emission of a photon.

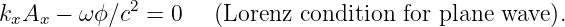

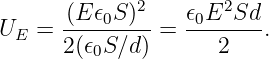

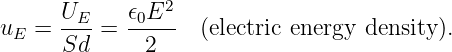

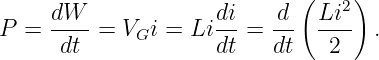

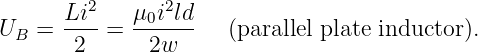

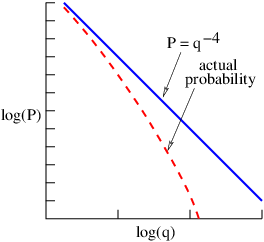

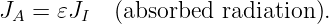

Another type of reaction that can generate radiation occurs when two charged particles (say, electrons) collide, as illustrated in the right panel of figure 16.8. In an elastic collision both electrons are real both before and after the photon transfer. However, it is possible for one of the electrons to have a virtual mass that is greater than the normal electron mass after the collision, which means that it is free to decay to a real electron plus a real photon.